Tests are a drag. Gamify!

When an infant learns to walk, she falls down often. We have no problem with those stumbles. In fact, we're sure the child will eventually figure out how to walk. We even think it’s cute. We take out our camera, record the memorable moment and send it to friends, grandpa, grandma and the uncles, who all get warm and fuzzy and sigh a collective “aww, so cute.”

Falling down and failing to get it right the first few times is recognized as a natural way to learn. The same is true of learning to play the piano, swim, dance, throw a baseball or give a speech. Muscle memory. Keep practicing. You’ll master it soon.

Student Evaluation

Things change in a hurry as the infant grows up and starts going to school, the place where learning is formalized and structured. Now falling down and stumbling are no longer treated lightly.

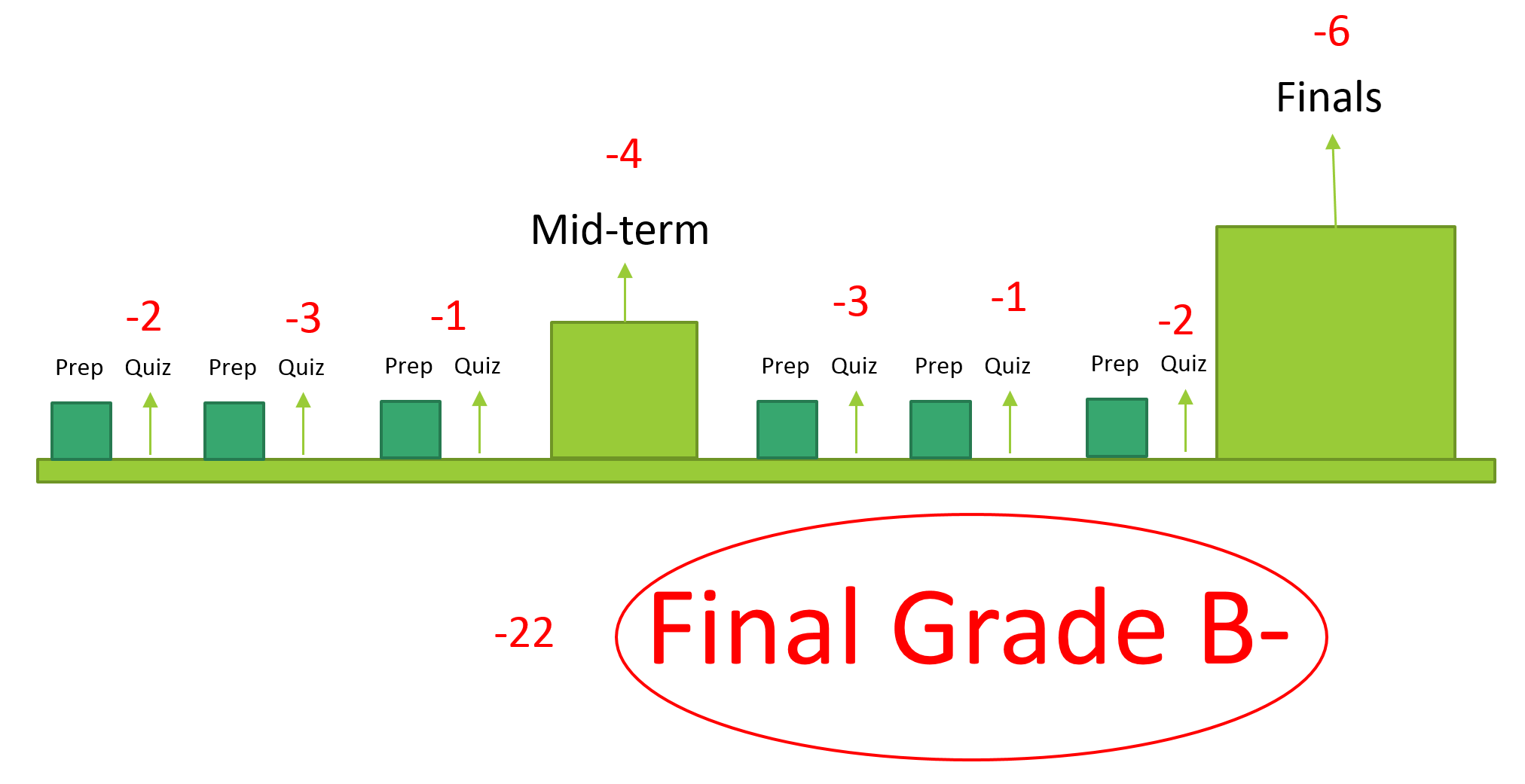

Take an entry-level biology class, filled with kids who probably don’t know what biology means. The goal is to make the students knowledgeable in the subject and, at the end of the course, figure out how much each student has learned. The usual evaluation process is through homework, quizzes, mid-terms, more quizzes and finals. The scores from those tests are weighted and added up for a final grade. The more mistakes you make, the more points are taken off your final score and the lower your grade. In some cases, you might still end up with a better grade if other students did worse than you.

Thus, exams have turned out to be more of a mistake counter than a measure of how much a student knows. Don't know the capital of Burundi? Minus two! It doesn't matter that you know the answer now that you have your graded paper back. You'll still be punished for not knowing it the first time.

Can you imagine what this approach would have done to the infant learning to walk? I'm sorry, little Tommy, you're a worse walker than little Tina because Tina fell fewer times while she was learning!

Exam Prep Models

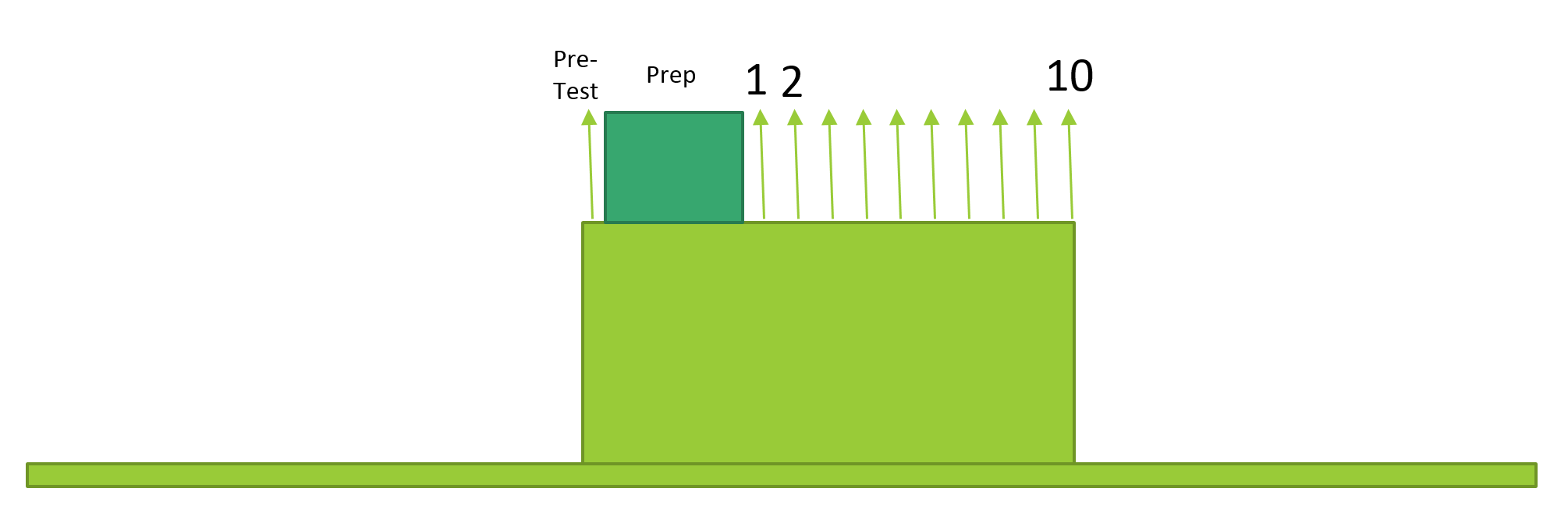

Another model commonly used to prep a student for a test is to pre-test, find weak areas, provide review material for each subarea, and follow up with a series of model exams. The expectation is that the student will diligently take the tests, self-grade, identify mistakes, review how to get them right and re-take the test (or move on to the next one).

Some exam prep books adopt this model. If the questions are comprehensive enough, scoring well on all the model tests is a good indicator of the student’s knowledge, and the student will probably do well on the real test that follows.

The problem is that the model only works for students who are already somewhat knowledgeable in the area. If the book is an SAT Math Subject Test prep book, the student had better have some exposure to most of the concepts (probability, vectors, matrices, functions). If not, she’ll fail the pre-test, the review section will be too cryptic, the problem sets too daunting, and she will get nothing out of the book or course.

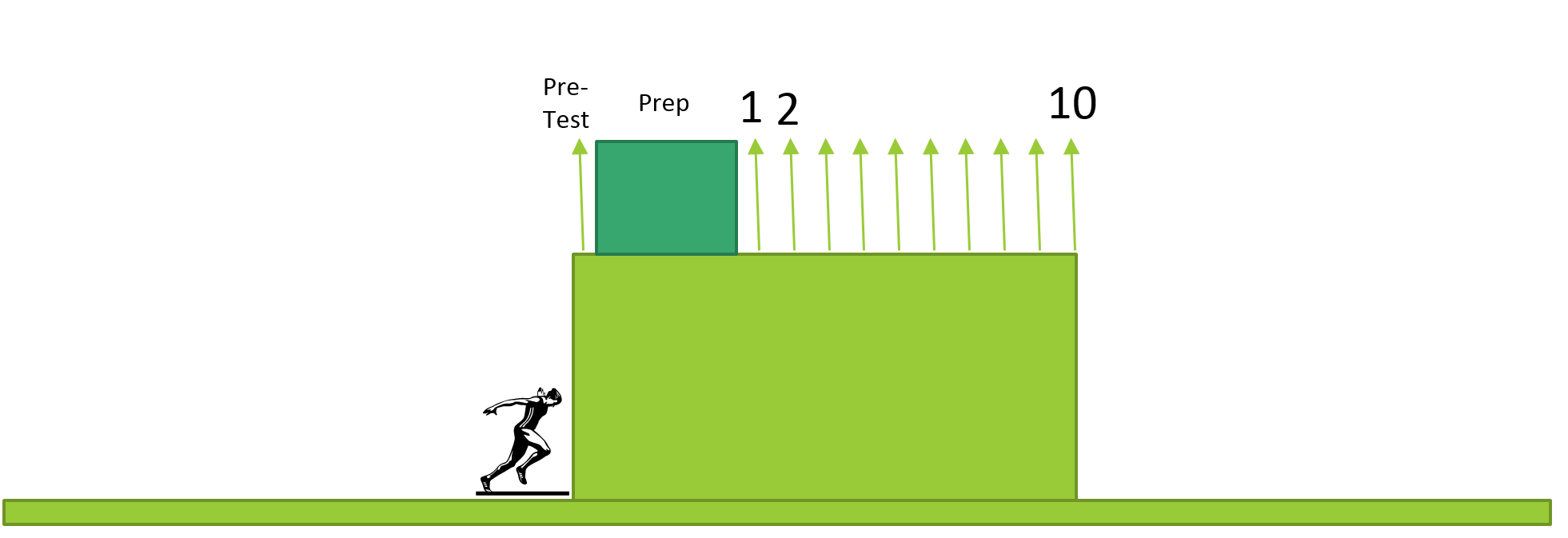

A newcomer to the subject will be unable to climb up the learning wall in order to get to the level of the pre-test.

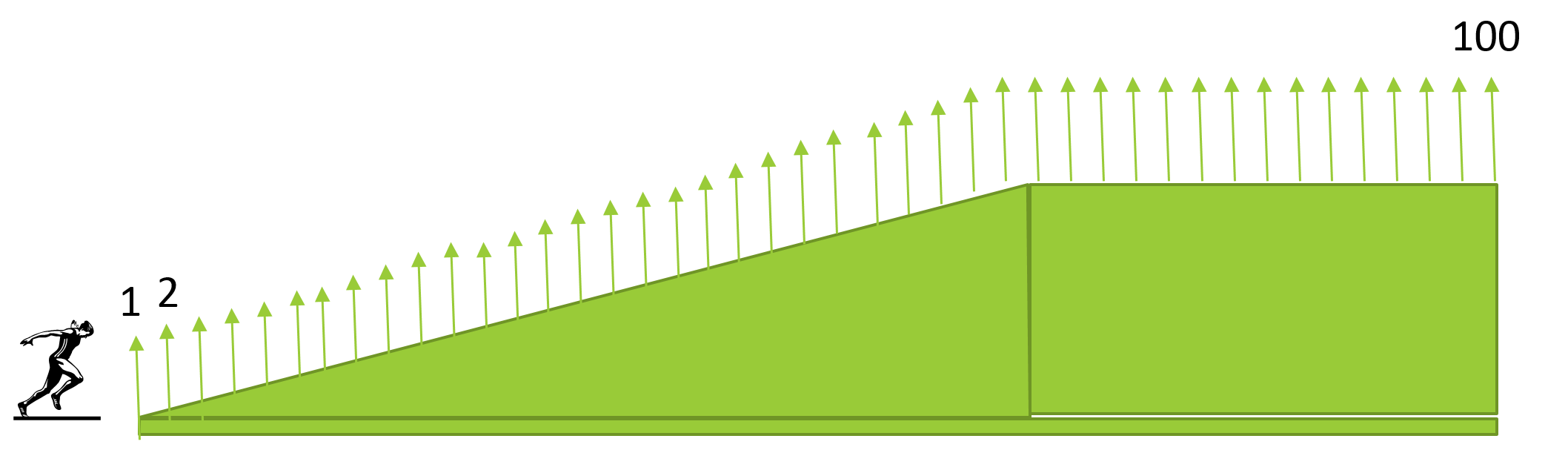

What if we changed the model such that the model tests, instead of being all at the level of difficulty of the real test, started off being easy, and progressively became more difficult?

Like Angry Birds. Or Temple Run.

The Ramp Model has several advantages:

Newcomers aren’t bewildered by the complexity of the questions because they start off with easy ones. The gaming world thrives by making the barrier to entry as low as possible.

Beginners get better without even realizing it. Play Bejeweled for a week, and chances are that people will be impressed with your speed and accuracy.

Learners with pre-existing knowledge of the material will quickly run up to their own level and train from there.

The method also clusters the students at various levels, enabling them to play each other, should the gamification extend to a multi-player model.
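To make the Ramp Model a little more concrete, here is a minimal Python sketch. It assumes (my assumption, not a fixed design) that every question is tagged with a difficulty level from 1 to 10 and that a learner moves up one level after three correct answers in a row. A wrong answer only resets the streak and takes nothing away, much like failing a level in a game. `RampSession` and its fields are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class RampSession:
    """Hypothetical ramp: difficulty levels 1..max_level, promotion after a streak."""
    max_level: int = 10
    streak_to_advance: int = 3
    level: int = 1
    streak: int = 0

    def record(self, correct: bool) -> int:
        """Record one answer and return the difficulty level of the next question."""
        if correct:
            self.streak += 1
            # Move up only after enough consecutive correct answers, and never past the top.
            if self.streak >= self.streak_to_advance and self.level < self.max_level:
                self.level += 1
                self.streak = 0
        else:
            # A miss costs nothing but the current streak; the level is kept.
            self.streak = 0
        return self.level


session = RampSession()
for answer in [True, True, True, False, True, True, True]:
    next_level = session.record(answer)
print(session.level)  # 3
```

A learner who already knows the material answers correctly and runs up the levels quickly; a newcomer stays on the easy questions until they stick.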

Ratings

What if we came up with a number system (or a descriptive set of tiers) to represent a learner's current skill level? Ratings are used in various sports and games: chess (Elo), tennis (ATP rankings), etc. A rating system would allow us to gamify the learning process by creating games played between two players whose ratings are close. Alternatively, an introverted student could play against a computer that plays near the student's level.
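For reference, the chess rating mentioned above works roughly like this. A player's expected score against an opponent depends only on the rating difference, and after a game each rating moves by K times the gap between the actual and the expected score. The sketch below uses the standard Elo formulas; the K-factor of 32 is a common choice but an assumption here, since rating bodies tune it.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of player A against player B under the Elo model (0 to 1)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def update(rating_a: float, rating_b: float, score_a: float,
           k: float = 32.0) -> tuple[float, float]:
    """Return the new ratings of A and B after one game.

    score_a is 1.0 for a win by A, 0.5 for a draw and 0.0 for a loss.
    """
    expected_a = expected_score(rating_a, rating_b)
    expected_b = 1.0 - expected_a
    new_a = rating_a + k * (score_a - expected_a)
    new_b = rating_b + k * ((1.0 - score_a) - expected_b)
    return new_a, new_b


print(update(1000, 1000, 1.0))  # (1016.0, 984.0)
```

Two evenly rated students split 32 points: the winner climbs to 1016 and the loser drops to 984. Beating a much stronger opponent earns more, losing to a much weaker one costs more. One could, hypothetically, give each question its own rating and treat answering it as a game, which is one way to build the "computer playing near the level of the student."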

OK, maybe the rating system can help someone prep for an exam. But how can we tell if a student passed or failed an exam? We still need to determine a learner's skill level based on his performance on a given test, right?

Maybe not. What if the rating were the measure of someone's knowledge? Perhaps someone with a rating of 1000 in Economics could be deemed to have passed the high school level course ("the coveted four-figure rating"). Then, as the rating improves, the student moves up to become a graduate, master and 'grandmaster'. The existing grading system (A, B, C, etc.) is too quantized and too local: a B+ in a university-level course may not mean the same as a B+ in high school or at another university. Gamifying the process would motivate a wider range of students to improve, and each would find their own place in the rating system.

We allow a child to fall as many times as needed to ‘graduate’ to a walking level. Some kids do it quickly, others need more trial and error. In the end, I don't think the quality of walking depends on the number of falls in the learning process.

Similarly, an exam should not be a one-time event. The danger of a single sitting is that you might be sick that day, or in a bad mood, and perform a lot worse than your knowledge level warrants.

I should be allowed to take the exam as many times as needed to cross a rating of X. Then I have passed. As long as the questions are randomized each time to the same overall degree of difficulty, taking it repeatedly will only improve retention. Some students might pass the very first time; others might need several attempts.

Like little Tommy trying to walk.
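One way (an assumption on my part) to randomize each attempt to the same collective degree of difficulty is stratified sampling: tag every question in a pool with a difficulty tier, then draw the same number of random questions from each tier on every attempt. The names `build_exam` and `has_passed` are hypothetical, and the pass threshold of 1000 borrows the four-figure rating from the Economics example.

```python
import random
from collections import defaultdict


def build_exam(pool: list[tuple[str, int]], per_tier: dict[int, int],
               rng: random.Random) -> list[str]:
    """Draw a random exam whose difficulty profile is identical on every attempt.

    pool holds (question_id, tier) pairs; per_tier maps each tier to how many
    questions of that tier every exam must contain.
    """
    by_tier: dict[int, list[str]] = defaultdict(list)
    for question_id, tier in pool:
        by_tier[tier].append(question_id)
    exam: list[str] = []
    for tier, count in sorted(per_tier.items()):
        # Sample without replacement within the tier, so no question repeats.
        exam.extend(rng.sample(by_tier[tier], count))
    return exam


def has_passed(rating: float, threshold: float = 1000) -> bool:
    """Passing means the learner's rating has crossed the threshold X."""
    return rating >= threshold


pool = [(f"q{tier}-{i}", tier) for tier in (1, 2, 3) for i in range(5)]
first_try = build_exam(pool, {1: 2, 2: 2, 3: 1}, random.Random())
second_try = build_exam(pool, {1: 2, 2: 2, 3: 1}, random.Random())
```

The two attempts will usually contain different questions, but each always has two easy, two medium and one hard question, so a retake is neither easier nor harder than the first sitting.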